Custom Home Office File and Dev Server

I have built plenty of “servers” over the years. That is, home-built machines pieced together with consumer-grade hardware and packed with hard drives to serve files and other services across my home and small office networks.

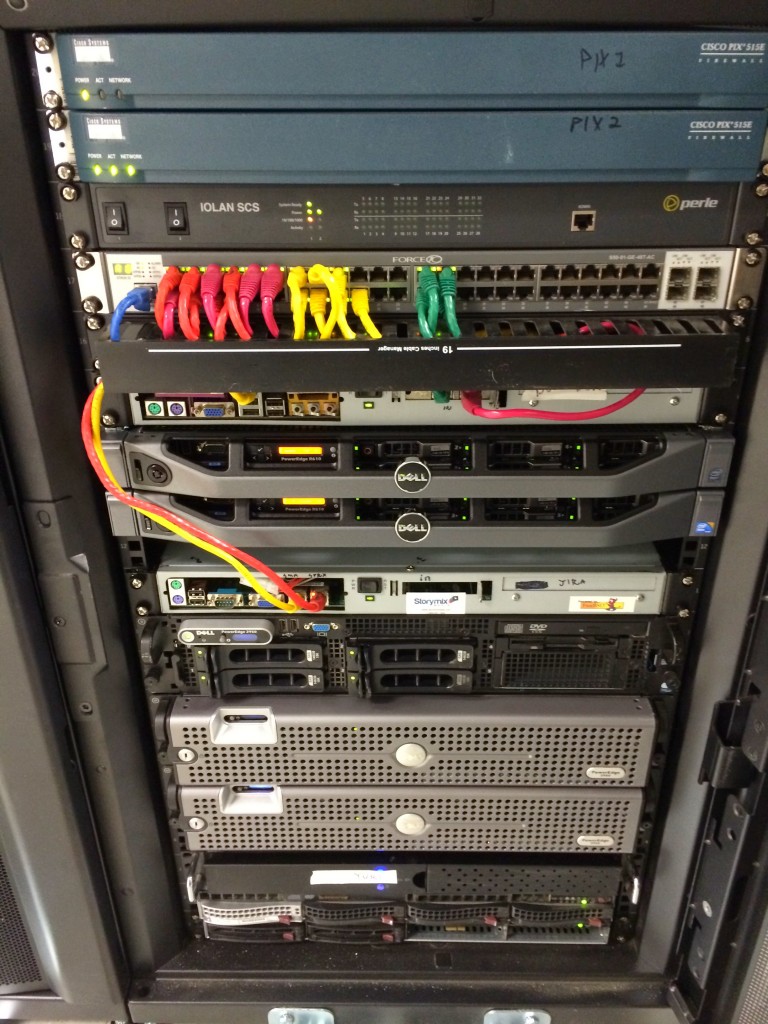

Typical half-cabinet deployment at a data center

My first file server build was for a vast LAN I created at my apartment complex while attending college in northern Idaho. I was 19 and insanely excited about LAN gaming, internet sharing (NAT), and general file sharing among my many connected neighbors. That ultimately became The Time I Ran an Unlicensed ISP From My Apartment, which included email and web hosting and was how I became involved in Linux/BSD system administration and web development. But that’s another story entirely, to be covered in a future post.

When I founded Sprux LLC, a private IT consulting business, I quickly found myself providing all kinds of internet hosting services for my clients. That sort of thing has always involved colocation space and actual enterprise-level servers (I’m a fan of the Dell PowerEdge line), but there is always a need for a big ol’ file server at the office for storing stuff I need quick access to, hosting off-site backups, and of course holding music and video collections (all work-related, naturally). I used to run software RAID exclusively (gmirror in FreeBSD and md in Linux) for my office file servers, primarily due to budget constraints. As I began making heavier use of virtualization for development work, I switched to hardware RAID so I could run everything in my office off one central server. With a dedicated RAID controller handling the array, the CPU and RAM stay free for virtual machines and other resource-hungry applications.

Consolidation

In 2011 I became absolutely fed up with the two 1U Supermicro machines sitting under my desk pumping out a half kilowatt of heat and deafening levels of fan noise. So I set out to replace them with a single box that would use less energy – thus producing less heat – and be near-silent, while also greatly increasing my on-site storage capacity. I would be replacing multiple dedicated development machines (one of which was an old laptop buried somewhere beneath my desk) with one big “server” running a hypervisor for my various development and testing needs, so I began researching hardware RAID solutions and ultimately settled on the relatively affordable 3Ware 9690SA-8I controller. It supports up to eight SAS or SATA drives and can be connected to a BBU for safe write-caching, which is a big deal when it comes to hardware RAID performance.

The entire build was focused on maximizing disk space, being energy efficient, and staying as quiet as possible despite cooling 10 hard drives. Here are the components I selected, though some of them are no longer available (like the tower case, unfortunately):

- NZXT WHISPER Classic Series Full Tower Case (has sound deadening material and large fans to minimize noise)

- iStartUSA BPN-DE340SS-BLACK SAS/SATA Trayless hot-swap cage (these things are amazing!)

- 600W Gold Certified PSU

- 8x WD RE4 2TB SATA hard drives (though today I would go with the WD Reds)

- 3Ware 9690SA-8I RAID controller w/ BBU (these are super affordable now on eBay)

- Intel Core i5 65W CPU (newer versions are available now)

- Asus ATX motherboard – can’t find receipt and unsure of the model

- 16GB of RAM (I just bought whatever was cheap – it’s all the same to me if it’s a major brand)

I also bought two extra 2TB drives so I could quickly replace any failed units, but the machine has been running 24/7 for nearly 4 years now and hasn’t had any issues with the RAID drives or controller. I used two 500GB Seagate SATA drives in a RAID-1 config for the OS volume (Linux software RAID). When I was ready to actually put the whole thing together, I decided to create a time-lapse video of the build using my trusty Nikon DSLR and a handful of ffmpeg, convert, and bash commands.
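For anyone curious how a folder of DSLR stills becomes a time-lapse, here is a minimal sketch of the ffmpeg step. It is not the exact set of commands used for this build: the `frames` folder, the `build.mp4` output name, and the 24 fps rate are all assumptions for illustration, and the function only assembles the argument list rather than running ffmpeg.

```python
def timelapse_command(frame_dir, output, fps=24):
    """Build an ffmpeg argument list that turns a folder of JPEG stills into a video.

    frame_dir, output, and fps are illustrative assumptions, not values from the build.
    """
    return [
        "ffmpeg",
        "-framerate", str(fps),       # how many stills are consumed per second of video
        "-pattern_type", "glob",      # let ffmpeg expand the wildcard itself
        "-i", f"{frame_dir}/*.jpg",   # stills are read in sorted filename order
        "-c:v", "libx264",            # encode as H.264
        "-pix_fmt", "yuv420p",        # pixel format most players can handle
        str(output),
    ]


if __name__ == "__main__":
    # Print the command so it can be inspected or pasted into a shell.
    print(" ".join(timelapse_command("frames", "build.mp4")))
```

The list form can be handed straight to `subprocess.run` once the paths point at real files. Note that the glob pattern type depends on how ffmpeg was built and is generally unavailable on Windows builds.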

The Build

Once the build was complete I created a RAID-6 volume using the eight 2TB drives connected to the 3Ware controller. That gave me ~12TB of array capacity (16TB raw, minus two drives’ worth of parity), and up to two physical disks can fail while the array continues to function without any data loss. I installed CentOS and created an ext4 filesystem on the RAID volume, which resulted in just over 11TB of usable space, and mounted it at /export. I then created various folders under the /export mount point, such as home, vms (for storing my virtual machines), backups, and others for business-specific needs. Before creating any user accounts on the system, I replaced /home with a symlink to /export/home/, since I wanted to ensure my user home folders were on the highly redundant RAID volume.
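The RAID-6 arithmetic is easy to check. The sketch below only models the parity overhead (two drives’ worth, whatever the array size); it ignores filesystem overhead, so it does not try to reproduce the ext4 figure. The function name and the four-drive minimum are assumptions of this sketch.

```python
def raid6_capacity_tb(drive_count, drive_size_tb):
    """Array capacity in TB for RAID-6: two drives' worth of space holds parity."""
    if drive_count < 4:
        # Assumption of this sketch: treat four drives as the practical minimum.
        raise ValueError("this sketch assumes at least four drives for RAID-6")
    return (drive_count - 2) * drive_size_tb


if __name__ == "__main__":
    drives, size_tb = 8, 2
    raw = drives * size_tb
    usable = raid6_capacity_tb(drives, size_tb)
    print(f"raw: {raw} TB, after parity: {usable} TB ({usable / raw:.0%} of raw)")
    # prints "raw: 16 TB, after parity: 12 TB (75% of raw)"
```

The same function shows why bigger arrays are more space-efficient under RAID-6: the parity cost stays at two drives, so the usable fraction grows with the drive count.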

Network Services

File Sharing

My home office includes workstations and laptops running various operating systems – Linux, Windows, and OS X all needed to be able to access the shared data on this server. Samba to the rescue! When configured correctly, Samba can easily share folders and files to all the previously-mentioned OSes. Thanks to the RAID controller (with write-cache enabled), we regularly saturate the 1Gbps wired connection on the server when both reading and writing large amounts of data over the network.
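As a rough illustration of what “configured correctly” can look like, here is a small sketch that renders one share section for smb.conf. This is not my actual configuration: the user names are made up, and the helper is hypothetical. The parameters it emits (`path`, `read only`, `browseable`, `valid users`) are standard smb.conf share options.

```python
def samba_share(name, path, users, writable=True):
    """Render one smb.conf share section as text.

    name, path, and users are supplied by the caller; nothing here is read
    from a real server.
    """
    lines = [
        f"[{name}]",
        f"    path = {path}",
        f"    read only = {'no' if writable else 'yes'}",
        "    browseable = yes",
        f"    valid users = {' '.join(users)}",  # only these accounts may connect
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # "alice" and "bob" are placeholder accounts for the example.
    print(samba_share("vms", "/export/vms", ["alice", "bob"]))
```

A section like this goes below the `[global]` block in smb.conf, and running `testparm` afterwards is a quick way to catch syntax mistakes before restarting the service.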

NAT, DHCP, DNS

I love my MikroTik router!

Before I acquired and properly configured a dedicated router, this machine was also doing NAT and DHCP for the entire LAN, acting as the network’s gateway to the internet. That was problematic whenever I needed to reboot the server for kernel updates or other maintenance (in a home office environment, every computer needs to be powered down and cleaned out periodically). Since deploying a well-configured MikroTik RouterBOARD RB2011UAS-2HnD-IN, the entire network has been far more stable. It even has dynamic DNS based on DHCP leases configured, which seems to have improved overall network performance, especially when accessing Samba shares with Macs and Linux machines. While the MikroTik device has some limitations and takes a bit of work to configure properly, overall I am extremely pleased with what it has done on both my home and work networks. It handles all kinds of routing, NAT, and basic network services well, and it saves me from dedicating a server to those jobs, settling for a cheap consumer-grade router, or paying for an expensive enterprise device.
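The idea behind DNS driven by DHCP leases is simple: every client that announces a hostname when it gets a lease also gets a matching DNS name. The sketch below shows that mapping in Python purely as a concept; it is not RouterOS script and not my router’s configuration. The lease format, the `office.lan` domain, and the addresses are all made up.

```python
import ipaddress


def records_from_leases(leases, domain):
    """Map DHCP leases to forward DNS records, returned as {fqdn: ip}.

    Each lease is a dict with an "address" and an optional "hostname";
    this layout is an assumption of the sketch.
    """
    records = {}
    for lease in leases:
        hostname = lease.get("hostname")
        if not hostname:
            continue  # clients that send no hostname get no record
        ip = ipaddress.ip_address(lease["address"])  # raises ValueError on bad input
        records[f"{hostname.lower()}.{domain}"] = str(ip)
    return records


if __name__ == "__main__":
    leases = [
        {"hostname": "MacBook", "address": "192.168.1.50"},
        {"hostname": None, "address": "192.168.1.51"},
    ]
    print(records_from_leases(leases, "office.lan"))
```

With names resolving locally like this, machines can reach each other by hostname instead of by whatever address they happened to be leased.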

VPN

I frequently need to access my home office while I’m traveling or dealing with a client emergency when away from home, so the server acts as a VPN endpoint and does basic routing between various subnets. This works brilliantly, especially with a little help from the MikroTik router, which keeps track of routes between the VPN subnets. The MikroTik device can act as a VPN endpoint itself; however, I found that its CPU is not quite powerful enough to handle encrypted VPN connections, resulting in poor network throughput.
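Routing between subnets comes down to picking the most specific route that contains the destination address. Here is a minimal sketch of that longest-prefix match. The subnets and gateway labels are invented for the example and do not reflect my real addressing.

```python
import ipaddress


def next_hop(routes, destination):
    """Return the gateway of the most specific route containing destination.

    routes maps CIDR strings to gateway labels; returns None if nothing matches.
    """
    dest = ipaddress.ip_address(destination)
    best = None
    for cidr, gateway in routes.items():
        network = ipaddress.ip_network(cidr)
        # Prefer the matching network with the longest prefix.
        if dest in network and (best is None or network.prefixlen > best[0].prefixlen):
            best = (network, gateway)
    return best[1] if best else None


if __name__ == "__main__":
    routes = {
        "0.0.0.0/0": "isp-uplink",      # default route
        "192.168.1.0/24": "office-lan",
        "10.8.0.0/24": "vpn-server",    # hypothetical VPN client subnet
    }
    print(next_hop(routes, "10.8.0.5"))
    print(next_hop(routes, "8.8.8.8"))
```

In this made-up table, traffic for a VPN client is handed to the server acting as the VPN endpoint, while everything else falls through to the default route.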

Off-site Backups

Nightly pull-style incremental differential backups keep critical files, databases, and other important stuff hosted on various colocated internet servers backed up in case of some kind of catastrophe at the data center. I use rdiff-backup for this, and frankly I think it’s brilliant. Doing a total restore of, say, a busy web server hosting many gigs of data from my home server is not ideal, which is why I also have a dedicated on-site backup solution in place at the colo. But it would certainly be a life-saver if something terrible happened in my colo rack and I lost everything, including the on-site backups.
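“Pull-style” means the home server initiates the connection and fetches the data, so the colocated machines never need credentials for the backup box. A minimal sketch of how the nightly commands could be assembled is below. The host name, the `backup` user, and the directory layout under /export/backups are assumptions, and it uses rdiff-backup’s classic `host::path` syntax (newer releases also offer an action-based form).

```python
def pull_backup_command(host, remote_path, local_root, user="backup"):
    """Build an rdiff-backup command that pulls remote_path from host over SSH.

    Uses the classic `user@host::path` source syntax; the target layout
    (one folder per host under local_root) is an assumption of this sketch.
    """
    source = f"{user}@{host}::{remote_path}"
    target = f"{local_root}/{host}{remote_path}"
    return ["rdiff-backup", source, target]


if __name__ == "__main__":
    # Hypothetical host and paths; a cron job could run one command per entry.
    jobs = [("web1.example.com", "/var/www"), ("web1.example.com", "/etc")]
    for host, path in jobs:
        print(" ".join(pull_backup_command(host, path, "/export/backups")))
```

Each run stores the current copy plus reverse diffs, so older versions of a file can be restored without keeping full copies for every night.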

Final Thoughts

My current desktop workstation is actually an enterprise tower server

In the end, I managed to build a versatile and highly capable server for my home office with just under 12TB of high-performance redundant storage for slightly less than $3000. If you were to build this exact machine today, it would cost closer to $2000 (maybe even less), since 2TB drives, RAID controllers, and just about every other component have dropped in price substantially.

The only issues I have had with this configuration are some oddness with the RAID controller, which reports drive timeouts that never seem to affect performance, and the death of one of my 500GB drives, which was a simple replacement. Oh, and I have had to replace the RAID battery once, which isn’t surprising after 3+ years of operation. For future builds, if I absolutely must have hardware RAID, I would look for a different controller. The 9690SA has performed well, but the warnings it spits out and its overall age do make me just a bit nervous.

I have operated enterprise servers deployed at data centers for a surprising number of years without any major hardware failures, and to this day I am responsible for the maintenance and upkeep of servers which are heavily used and, in some cases, 10 years old. Against that background, it’s nice to know one can build a reliable home server with great specs – and tons of disk space – on a relatively limited budget. That said, I’ll always opt for the enterprise solution when possible. Last year, when my five-year-old desktop workstation could no longer keep up with the demands of my daily work, I replaced it with a Dell T710, which is a PowerEdge server in a tower case. It has dual quad-core Xeon CPUs, 72GB of RAM and 15k SAS disks on a hardware RAID controller (RAID-10 in this case). I bought it used for about $2000, added a modern video card, and expect it to last at least five years without even having to think about it. It takes up a lot of space on my desk, but I couldn’t put together anything near the specs it has using consumer hardware without spending at least $1000 more, and even that wouldn’t get me the 15k SAS drives :).